PowerShell File Processing

PowerShell is good for some things. The data object model makes for good functionality. But making all data objects makes PowerShell a dog for handling large data files. Often in our business we use both Windows and Linux to process the same data. Linux does not generally like the files PowerShell creates.

Text File Encoding.

To understand what is going on a little explanation is needed. By default if Text is redirected to a file in PowerShell the encoding is UCS-2 LE BOM.

PS>echo “This is a test” > test.txt

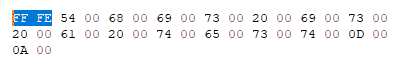

If you open this file in a text editor it looks like a normal file but if you open it in a hex editor. You can see first the BOM “Byte Order Mark” FF FE and then the text in Unicode. The BOM makes all Linux and Linux based utilities choke. E.g. Hashcat does not a like file with the BOM.

You can also encode a file in UCS-2 BE BOM. In that case the BOM is FE FF and byte order is reverse - the characters start with the 00. Most Linux utilities like plain UTF8 files with no BOM. UTF8 is often also called ASCII. UTF8 encoded files are also ½ the size of UCS-2 LE BOM encoded files because each character is only one byte long. Note sometimes UCS-2 is called UTF-16. To confuse matters more you can encode a file in UTF8 BOM.

How do you determine the text encoding of a file? You can always open it up in a hex editor and look at the first few bytes. Here is a table listing different BOMs.

| BOM | Encoding |

|---|---|

| EF BB BF | UTF8 BOM |

| FE FF | UTF16 Little Endian – UCS-2 LE BOM |

| FF FE | UTF16 Big Endian – UCS2 BE BOM |

| 00 00 FF FE | UTF32 Big Endian |

| FE FEF 00 00 | UTF32 Little Endian |

| 2B 2F | UTF7 |

| ASCII – UTF8 |

Confusing as this is, not being aware of file encoding can cause several issues with PowerShell. Unfortunately, PowerShell does not have an inbuilt function to detect file encoding. If the file is small enough to open in a text editor like notepad++ it will tell you the encoding. But then this would be irrelevant because you can easily search and manipulate the file. 99% of the time the files you encounter will be encoded either UTF8 or UCS-2 LE BOM. If you are having issues it is sometimes a good idea to look at the file encoding.

There is a PowerScript on my github to determine the file encoding Github

Changing File Encoding

PowerShell does have built-in functions you can use to change file encoding: out-file and append-content. For both of these commands you can add the -encoding parameter and change the encoding to one of unknown, string, unicode, bigendianunicode, utf8, utf7, utf32, ascii, default and oem. By now you should know that Unicode = UTF16 = UCS-2 LE BOM, UTF8 = UTF8 BOM etc. Why can’t they agree on one designation? Note: if you don’t designate the encoding the default in PowerShell is UCS-2 LE BOM.

Line Length

If you are not thoroughly confused at this point there is another quirk to know about PowerShell. By default the maximum line length is 260 characters. When PowerShell reaches this limit it wraps the line. Ugh. This behavior can be observed when using the redirect operators and when using all character out functions (like out-file) except append-content. Very often we deal with log files whose lines are longer than 260 characters. When we process them with PowerShell without consideration PowerShell wraps these lines messing up our log file.

Processing Large Text files in PowerShell

With these quirks and limitations in mind lets get on to processing large files with PowerShell.

Objects

For PowerShell everything is an object. This can be very useful, but it also introduces a lot of overhead. For doing simple file manipulation like sorting lines, splitting a file into parts, joining two files together, and getting the unique lines in a file PowerShell sucks. Luckily PowerShell has direct access to .NET file handling routines that allow PowerShell to handle files two to four times bigger than standard PowerShell and much faster.

I have a PowerShell library on github that uses the .Net routines to make file operations must faster.

If a file is read with the get-content function each line is read into memory as a separate object. This provides expanded processing capability but introduces overhead. A file can be read all at once as a single object using the built in .Net routines.

[Io.File]::ReadAllText($path)

Searching Large Text Files with Select-String

PowerShell has grep functionality built in with the Select-String function. It takes standard RegExp expressions, but it too has some quirks. By default if you search a file it lists each line that matches the RegEx preceded by the full name of the file, then the line number, then the line. I seldom want this. So searching a simple file with one word per line and matching the word ‘test’ will product output like this.

E:\somefolder\somefile.txt:134:test

Ugly! This can be fixed in one of two ways. The simple and not complete way. Is to only return the line.

(Sls ‘text’ f:\powershell\filelibrary).line | add-content -utf8 test.txt

Results in:

Library of text file handling routines using dot net routines for speed.

type text.txt | out-utf8 c:\example.txt

takes the text from text.txt and writes it to example.txt encoded utf8 no bom

$reader = [System.IO.File]::OpenText($inFile)

$reader = [System.IO.File]::OpenText($inFile)

$reader = [System.IO.File]::OpenText($inFile)

A little explanation of that construction. The parenthesis around sls 'text' f:\powershell\filelibrary.ps1 allows you to access any part of the select-string object that is returned. Here we only want the line

To see all the parts of the MatchInfo object returned feed the output of the Select-String command to get-member. You can see all the parts available

TypeName: Microsoft.PowerShell.Commands.MatchInfo

Name MemberType Definition

---- ---------- ----------

Equals Method bool Equals(System.Object obj)

GetHashCode Method int GetHashCode()

GetType Method type GetType()

RelativePath Method string RelativePath(string directory)

ToString Method string ToString(), string ToString(string directory)

Context Property Microsoft.PowerShell.Commands.MatchInfoContext Context {get;set;}

Filename Property string Filename {get;}

IgnoreCase Property bool IgnoreCase {get;set;}

Line Property string Line {get;set;}

LineNumber Property int LineNumber {get;set;}

Matches Property System.Text.RegularExpressions.Match[] Matches {get;set;}

Path Property string Path {get;set;}

Pattern Property string Pattern {get;set;}

Matches

Sometimes you want to only return the text that matches your regex and not the whole line. E.g. if you want to extract the IP addresses from a text file. The following line will do this.

sls '\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3}' -allmatches $filename | %{$_.matches.value}

gain sls returns match objects and we are selecting the value of each match.

-allmatches is used so it will get multiple IP address per line.

Regex Captures

Select-string supports capturing only part of the line that matches. You might want to do this if you want to extract parts of a line. Captures are designated by parenthesis and retrieved similar to the previous example.

sls '(\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3})' -allmatches $filename | %{$_.matches.captures[0].value}

Multiple captures are designated by the index to captures.

CSV Files

A large part of the files we process are csv files. PowerShell has good csv processing routines built in.

If you have a large csv log file and you only want to select parts of it use the import-csv and export-csv functions.

A command like this will filter by date.

Import.csv log.csv | where {$_.datetime -gt ‘2019-03-01 00:00’} | export-csv -notype filtered.csv

Another quirk of PowerShell is it insists on putting the type of csv in the first line of the csv unless you tell it not to. ☹ That is the reason for the -notype option (short for -NoTypeInformation) given to export-csv.

Processing a CSV in this way, PowerShell only processes one line at a time and does not run into size limitations.

If you want to filter on multiple fields you can do that with something like this:

Where {$_.datetime -gt ‘2019-03-01 00:00’ -and $_.method -eq ‘PUT’}

You can also pare down the log file by only selecting the fields you want. You can also reorder the fields for those stupid log files that put the datetime last.

Import-csv log.csv | select datetime, method, url | export-csv -notype output.csv

If you encounter a log file with non-standard delimiter you can designate that with the -delimiter argument.

Import-csv -delim ‘|’ log.csv

Or

Import-csv -delim "`t" log.csv

For a tsv

You can designate a header for a csv file with no header

Import-csv -header "field1","field2","Field3" log.csv

XML Files

PowerShell can process XML files natively. The [XML] type transforms XML content and returns an object.

[XML]somefile.xml will parse the xml file and return an object. You can then parse and select the output like a csv.

There are also four comandlets that allow you to process xml files ConvertTo-Xml, Export-Clixml, Import-Clixml and Select-Xml.

JSON Files

PowerShell has two Json commandlets 'ConvertFrom-Json and ConvertTo-Json' that process Json lines. With the limitation that they only process one line at a time they work well.